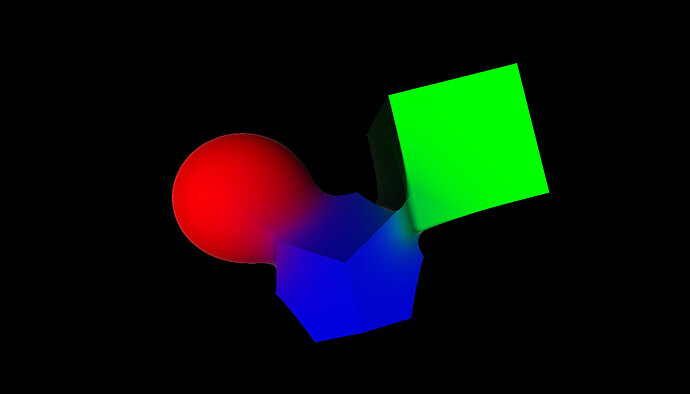

This project is a real-time GPU raymarching experiment built with Three.js and a custom GLSL fragment shader. A full-screen quad is rendered with a signed distance field (SDF) scene evaluated per pixel via sphere tracing. The scene contains three independently moving primitives: a sphere (red), a box (green), and an icosahedron (blue), each drifting smoothly to seeded "random" X/Y positions over time. When objects overlap, both geometry and color blend smoothly using a soft-minimum (log-sum-exp) distance combination, producing liquid-like merges instead of hard cutoffs. Shading is based on surface normals derived from the SDF gradient, with a diffuse light term and an added Fresnel rim highlight to emphasize silhouettes. The camera uses aspect-correct screen coordinates suitable for raymarching, and object rotation is applied as an inverse transform of the sample point to keep the distance estimate stable.
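For readers who want to poke at the math outside a shader, here is a minimal CPU-side sketch in plain JavaScript of the techniques listed above: SDF primitives, an inverse rotation of the sample point, a log-sum-exp soft minimum, central-difference normals, sphere tracing, and diffuse plus Fresnel rim shading. It is not the author's shader. The blend constant `K`, the scene layout, camera, light direction, the softmax-style color weighting, and every function name are assumptions for illustration, and the icosahedron is omitted for brevity.

```javascript
// Hypothetical CPU sketch of the shader math; all names and constants are assumptions.
const add = (a, b) => [a[0] + b[0], a[1] + b[1], a[2] + b[2]];
const sub = (a, b) => [a[0] - b[0], a[1] - b[1], a[2] - b[2]];
const scale = (a, s) => [a[0] * s, a[1] * s, a[2] * s];
const dot = (a, b) => a[0] * b[0] + a[1] * b[1] + a[2] * b[2];
const length = (a) => Math.sqrt(dot(a, a));
const normalize = (a) => scale(a, 1 / length(a));

// Primitive SDFs: signed distance from point p to the surface.
const sdSphere = (p, r) => length(p) - r;
function sdBox(p, b) {
  const q = [Math.abs(p[0]) - b[0], Math.abs(p[1]) - b[1], Math.abs(p[2]) - b[2]];
  const outside = length(q.map((v) => Math.max(v, 0)));
  const inside = Math.min(Math.max(q[0], Math.max(q[1], q[2])), 0);
  return outside + inside;
}

// Rotation about Y. To rotate an object by +angle, rotate the sample point by
// -angle (inverse transform); rotations preserve distances, so the SDF stays valid.
function rotateY(p, angle) {
  const c = Math.cos(angle), s = Math.sin(angle);
  return [c * p[0] + s * p[2], p[1], -s * p[0] + c * p[2]];
}

// Log-sum-exp soft minimum. The normalized exponentials double as blend
// weights for color (this color scheme is an assumption).
function softMin(ds, k) {
  const m = Math.min(...ds); // subtract the hard min for numerical stability
  const ws = ds.map((d) => Math.exp(-k * (d - m)));
  const sum = ws.reduce((a, b) => a + b, 0);
  return { d: m - Math.log(sum) / k, weights: ws.map((w) => w / sum) };
}

const K = 8; // hypothetical blend sharpness: larger K means a harder union
const COLORS = [[1, 0, 0], [0, 1, 0]]; // sphere red, box green

function scene(p, t) {
  const dSphere = sdSphere(sub(p, [-0.6, 0, 0]), 0.5);
  const dBox = sdBox(rotateY(sub(p, [0.6, 0, 0]), -t), [0.4, 0.4, 0.4]);
  const { d, weights } = softMin([dSphere, dBox], K);
  const color = COLORS.reduce((c, col, i) => add(c, scale(col, weights[i])), [0, 0, 0]);
  return { d, color };
}

// Surface normal = normalized SDF gradient, estimated by central differences.
function normalAt(p, t, eps = 1e-3) {
  const f = (q) => scene(q, t).d;
  const dx = [eps, 0, 0], dy = [0, eps, 0], dz = [0, 0, eps];
  return normalize([
    f(add(p, dx)) - f(sub(p, dx)),
    f(add(p, dy)) - f(sub(p, dy)),
    f(add(p, dz)) - f(sub(p, dz)),
  ]);
}

// Sphere tracing: advance by the distance bound until hit (d < hitEps) or escape.
function march(ro, rd, t, maxSteps = 128, maxDist = 20, hitEps = 1e-4) {
  let travelled = 0;
  for (let i = 0; i < maxSteps; i++) {
    const d = scene(add(ro, scale(rd, travelled)), t).d;
    if (d < hitEps) return travelled;
    travelled += d;
    if (travelled > maxDist) break;
  }
  return -1; // miss
}

// Shade one pixel: aspect-correct uv, diffuse term, Schlick-style rim (F0 = 0).
function shadePixel(x, y, width, height, t) {
  // Divide both axes by height so x spans [-aspect, aspect] and y spans [-1, 1].
  const uv = [(2 * x - width) / height, (2 * y - height) / height];
  const ro = [0, 0, 3];
  const rd = normalize([uv[0], uv[1], -1.5]); // hypothetical focal length 1.5
  const hit = march(ro, rd, t);
  if (hit < 0) return [0, 0, 0];
  const p = add(ro, scale(rd, hit));
  const n = normalAt(p, t);
  const diffuse = Math.max(dot(n, normalize([0.5, 0.8, 0.6])), 0);
  const fresnel = Math.pow(1 - Math.max(dot(n, scale(rd, -1)), 0), 5);
  return scene(p, t).color.map((c) => Math.min(c * (0.15 + 0.85 * diffuse) + fresnel, 1));
}
```

Calling `shadePixel` in a loop over a small grid gives a crude CPU render that is handy for checking values; in the actual project the same logic runs per fragment on the GPU. Because the log-sum-exp blend is always at or below the hard minimum, the merged surface swells slightly where objects approach each other, which is what produces the liquid-like bridges.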

This is really interesting stuff. It feels like the kind of low-level graphics experimentation that turns into a browser mini game over time.

I feel that you are getting closer and closer to the point where you’re building mini games without even trying.


Right now I'm learning a lot, because the shader world has no end: it just keeps going, and it keeps my brain engaged. I've noticed that if I do things I already know or understand, I get bored fast. There are developers who have been doing this for more than 40 years, and there are still concepts they don't even know exist.

What’s interesting about this project is that it’s pure math. Those shapes and colors are 100% mathematical computations on the GPU. It’s crazy to think about.
